The End of Pure SaaS in GTM Tech?

Why AI sales tools are falling short, where the implementation gap actually is, and why the future of GTM software looks more like Palantir than Salesforce.

Most AI-native sales tools look identical: identify signals, scrape LinkedIn, write an email – and every single one fails in production for the same reason.

These tools are flooding the market with promises to automate prospecting, account scoring, outbound, forecasting, and CRM updates. The reality: pilot fatigue, low rep adoption, poor results, and the perception that AI is nothing more than a research tool.

The problem isn’t the software. It’s that we’re trying to solve a data engineering problem with a UX layer.

The “GTM Engineer” Is a Powerful Wedge – Not the End State

The “GTM Engineer” represents real progress – technical operators who use tools like Clay, n8n and Claude Code to achieve the results of five reps. But the role is being sold as the final evolution of sales ops, when it’s really just the next phase.

GTM Engineers excel at building outbound engines and data pipelines, but this approach works best when demand outweighs capacity – in other words, when volume beats precision. Today that is most common at early-stage and AI-native startups.

As markets mature and these companies grow into their demand, the “bar” to generate pipeline rises and revenue teams will need to shift to account-based selling (‘ABS’) to continue scaling.

The bottleneck shifts from the engineer building systems to the rep responsible for driving and executing a territory strategy. At this point “more touches” isn’t the problem – context is.

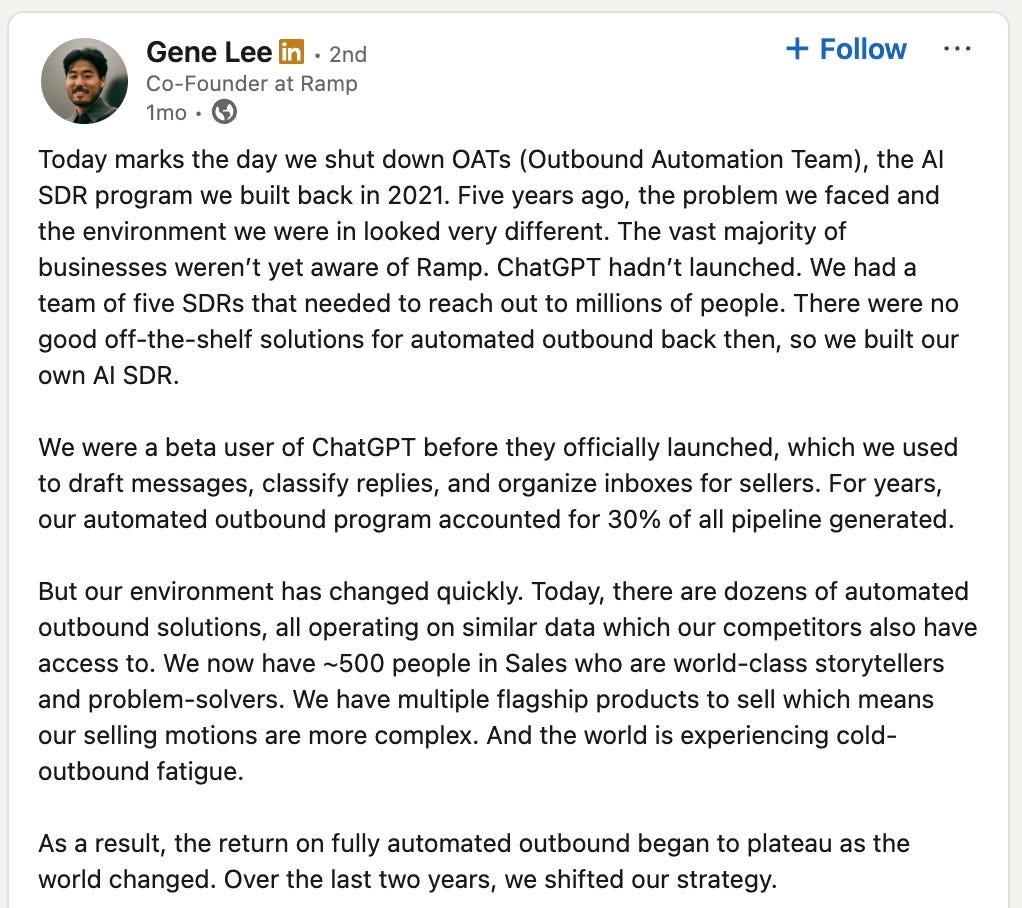

This recently played out with Ramp sunsetting its Outbound Automation Teams.

What Ramp discovered is that ‘more touches’ stops working when your market knows who you are. The playbook that generated 30% of pipeline in 2022-23 hit diminishing returns in 2024-25.

As Ramp matured in a crowded market, generating new conversations became harder and humans became a requirement because they could pull from unstructured context.

Quote from Gene Lee, Ramp’s co-founder:

“There are dozens of automated outbound solutions, all operating on similar data which our competitors also have access to… We have multiple flagship products to sell which means our selling motions are more complex. And the world is experiencing cold-outbound fatigue. As a result, the return on fully automated outbound began to plateau as the world changed. Over the last two years, we shifted our strategy.”

See the full post: here.

In other words, it required a level of context and strategy that automation couldn’t provide.

The AI Adoption Challenge

We’ve spent the last year piloting various AI tools at Grafana Labs. On paper, they look great. They can scrape LinkedIn, read a 10-K, and draft “personalized” emails in seconds.

And yet, in almost every case, I’m struggling to get more value than I do directly from Gemini or ChatGPT.

This is because, as a seller, I’m thinking through all of this when I approach an account:

What’s happening in this account right now

What technologies are they using, and how confident are we in that data

What we’ve tried before and why it didn’t stick

What nuggets can I find in Slack, Gong calls, CRM or support tickets

Who’s important and what do they care about

Do we have mutual connections or overlapping investors/board members

What’s my best hypothesis based on all of this

AI GTM tools have external signals, an overview of our business and access to a few CRM fields, but they don’t have the internal context required to be effective in complex selling environments.

In practice, the tool becomes another tab I have to sanity-check – so it doesn’t save time, it shifts effort into QA. Reps look at the tool and think: “This is fine, but I can do better… and faster… because I actually know what’s going on.” And they revert to working manually.

Building the “Context Layer”

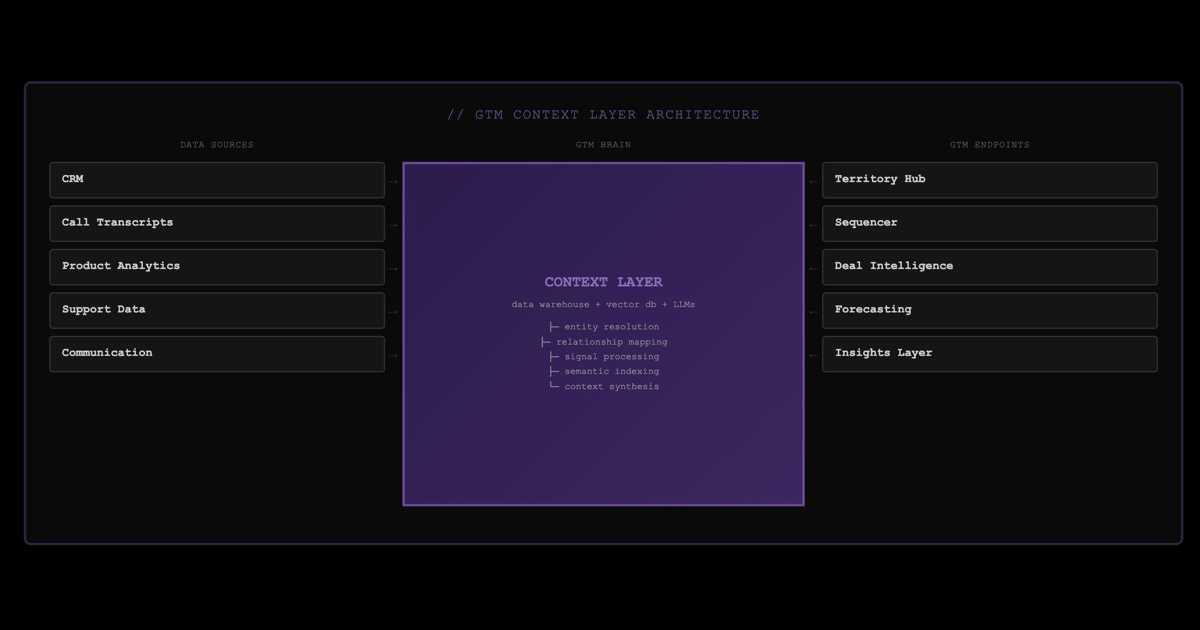

This leads us to the main requirement for the future of GTM: The organization-wide “Context Layer”. We need a “Brain” that connects every piece of tribal and digital data into a single, queryable source. This brain should power every tool and “outcome” of the GTM tech stack:

CRM (data hygiene, updates)

Prospecting (account and contact identification, ranking)

Account hypothesis

Email personalization and sequencing

Deal strategy

Forecasting

The difference between a tool with external signals and one with full organizational context isn’t incremental; it’s transformational.

Take “Account hypothesis” as an example. A generic AI tool might say: ‘They just posted a DevOps Engineer job that references observability tooling – good timing for outreach.’

A context-aware GTM tool would say:

‘They posted a DevOps role that references Datadog. Their new VP Engineering came from a customer – and she’s connected to our Director of Sales Engineering on LinkedIn. On a recent cold call a DevOps Engineer mentioned “visibility gaps in microservices” due to budget limitations. Recommended play: Warm intro through our Sales Engineering Director, position as the cost-effective alternative with a message acknowledging the VP’s Grafana experience.’

The SaaS Gap: Why “Total Context” is a Services Problem

Bridging the “Context Gap” isn’t a feature you can ship – it’s a data engineering project.

It requires connecting data warehouses, vector databases, and unstructured data sources into a unified, queryable layer that understands relationships between accounts, people, deals, and signals. This is why services are non-negotiable: you cannot self-serve your way into a GTM Brain.
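To make “a unified, queryable layer that understands relationships” a little more concrete, here is a minimal sketch in Python. Everything in it is hypothetical: the `ContextGraph` class, the relation names (`has_contact`, `connected_to`, `has_signal`), and the sample records are assumptions for illustration, not any vendor’s schema. A real version would sit on a warehouse or graph store rather than an in-memory dictionary.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class ContextGraph:
    """Toy in-memory store of typed relationships between GTM entities."""

    # edges[(subject, relation)] -> set of related objects
    edges: dict = field(default_factory=lambda: defaultdict(set))

    def add(self, subject: str, relation: str, obj: str) -> None:
        self.edges[(subject, relation)].add(obj)

    def related(self, subject: str, relation: str) -> set:
        # .get avoids creating empty entries in the defaultdict on lookup
        return set(self.edges.get((subject, relation), set()))

    def warm_paths(self, account: str) -> list:
        """Return (employee, contact) pairs where one of our people knows a contact."""
        paths = []
        for contact in sorted(self.related(account, "has_contact")):
            for employee in sorted(self.related(contact, "connected_to")):
                paths.append((employee, contact))
        return paths


# Hypothetical sample data, loosely mirroring the account hypothesis example
graph = ContextGraph()
graph.add("acme", "has_contact", "vp_engineering")
graph.add("acme", "has_contact", "devops_engineer")
graph.add("vp_engineering", "connected_to", "our_se_director")
graph.add("acme", "has_signal", "job_post_mentions_datadog")

print(graph.warm_paths("acme"))
```

The hard part is not the query; it is populating the edges from systems that were never designed to agree with each other, which is where the services work comes in.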

You can see this struggle playing out in real-time with the industry’s biggest player: Salesforce.

With the launch of Agentforce, Salesforce attempted to solve the GTM problem by promising “autonomous agents” that could be deployed across the enterprise with a few clicks. In theory, the ultimate AI solution. In reality, it has faced significant headwinds. As of early 2026, adoption remains sluggish, and many pilots have stalled.

Agentforce’s struggle in the market supports this thesis. Salesforce built world-class AI infrastructure, but even with its resources it underestimated the difficulty of addressing the “Context Gap” and hit the same wall: you can’t ship organizational context as a feature.

Reports from the field suggest that Agentforce is less effective when it lacks the total context required for seller-level execution. The agent can see the CRM and Slack, but not historical deal patterns, product usage, support history, call transcripts or social connections, and as a result, struggles with nuance.

To Salesforce’s credit, they’ve acknowledged this publicly – their implementation guidance now explicitly states that Agentforce requires ‘clean data, unified context, and workflow mapping’ before deployment.

In other words: services.

The Palantir Parallel: Forward Deployed GTM

Once you accept that GTM transformation is a data engineering problem disguised as a sales problem, you realize that the traditional SaaS model is insufficient. We need a new model: Software-led services.

To understand what this looks like in practice, we have to look at Palantir.

Palantir has proprietary software. But they don’t “sell software” in the conventional SaaS sense. They sell transformations – outcomes that are only possible because they pair the platform with forward-deployed engineers (‘FDEs’) who:

Live inside their customer’s environment

Integrate messy data

Configure workflows

Build bespoke logic

Embed into the customer’s operating reality

Make the tool usable in context, not just in theory

Critically, Palantir charges for this. Their FDEs aren’t ‘customer success reps’ – they’re embedded engineers billed as part of the contract. The software and the implementation are one offering.

This model exists because there’s a ceiling to what generic software can do inside highly variable, high-stakes environments like government, defense, and intelligence (and ironically, enterprise sales).

This is the model GTM software needs to adopt. It’s the only model that actually works when every company’s revenue motion is a snowflake – different ICP, different product wedges, different sales cycles, different data quality.

The GTM software companies that win will start to look like software + forward-deployed RevOps/GTM Engineering. These vendors will provide the software, but they’ll also provide the resources to connect the organizational context that is fragmented across systems, inconsistent, and mostly unstructured.

My Thesis For the Next Five Years of GTM Software

The context layer becomes the “oil” of go-to-market technology. It’s what everything runs on – but it requires extraction, refinement, and infrastructure to be useful.

Every downstream workflow needs the same core capability: a shared “brain” that holds external and organization-wide context and can apply it to specific GTM actions.

To be effective, that brain has to address the “Context Gap” by integrating:

Internal data (CRM, engagement data, product usage, billing, support)

Unstructured knowledge (call transcripts, internal messages, notes, docs, emails)

External signals (company and contact details, initiatives, relevant activity, tech stack)
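The three categories above could be sketched as a single per-account record. This is only an illustration under stated assumptions: the `AccountContext` class, its field names, and the sample values are hypothetical, and the flattening into plain text is just one simple way to hand the result to a model.

```python
from dataclasses import dataclass, field


@dataclass
class AccountContext:
    """Hypothetical per-account record combining the three source categories."""

    account: str
    internal: dict = field(default_factory=dict)      # CRM, usage, billing, support
    unstructured: list = field(default_factory=list)  # transcript and note snippets
    external: list = field(default_factory=list)      # signals, tech stack

    def missing_sources(self) -> list:
        """Name the source categories that are still empty for this account."""
        return [name for name in ("internal", "unstructured", "external")
                if not getattr(self, name)]

    def to_prompt(self) -> str:
        """Flatten the record into plain text a language model could be given."""
        lines = [f"Account: {self.account}"]
        lines += [f"[internal] {k}: {v}" for k, v in self.internal.items()]
        lines += [f"[unstructured] {s}" for s in self.unstructured]
        lines += [f"[external] {s}" for s in self.external]
        return "\n".join(lines)


# Hypothetical sample: internal and external data present, no call notes yet
ctx = AccountContext(
    account="acme",
    internal={"open_support_tickets": 3},
    external=["job post references Datadog"],
)
print(ctx.missing_sources())
print(ctx.to_prompt())
```

The `missing_sources` check is the useful part of the sketch: a tool that only has external signals would report two gaps for every account, which is the “Context Gap” in miniature.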

Once you have that context layer, AI generates measurable ROI: faster deal cycles, higher win rates, and predictable pipeline – because the foundation models finally have the raw material needed to be useful.

Wrapping Up

The era of “pure SaaS” in GTM is coming to an end.

As software becomes a commodity, the focus is shifting back to data engineering, tribal knowledge, and the systems integration that actually makes a seller effective.

If you’re a GTM software founder, your moat isn’t your UI; it’s your ability to ingest context. If you’re a sales or RevOps leader, your job is to establish an internal “Context Layer” as fast as you can.

-Cam Wright

P.S. - if you enjoyed this article, feel free to leave a “like”, “comment” or “subscribe”. I read every comment and will make sure I get back to you.
